Xembly: Designing Trust Between People and AI

Role: Director of Design

Stage: Series A, $20M+

Domain: Enterprise AI productivity (scheduling, task tracking, meeting intelligence)

Outcome: User activation lifted from 18% to 29%

Users were signing up and stalling. The AI was capable. The product wasn't earning trust fast enough to convert capability into adoption. I redesigned the core interaction model around transparency rather than automation, giving users visibility into the AI's reasoning before asking them to act on it. Activation moved from 18% to 29%. The design pattern is now how I think about any AI product where user trust is the real adoption barrier.

The Real Problem Wasn't Productivity

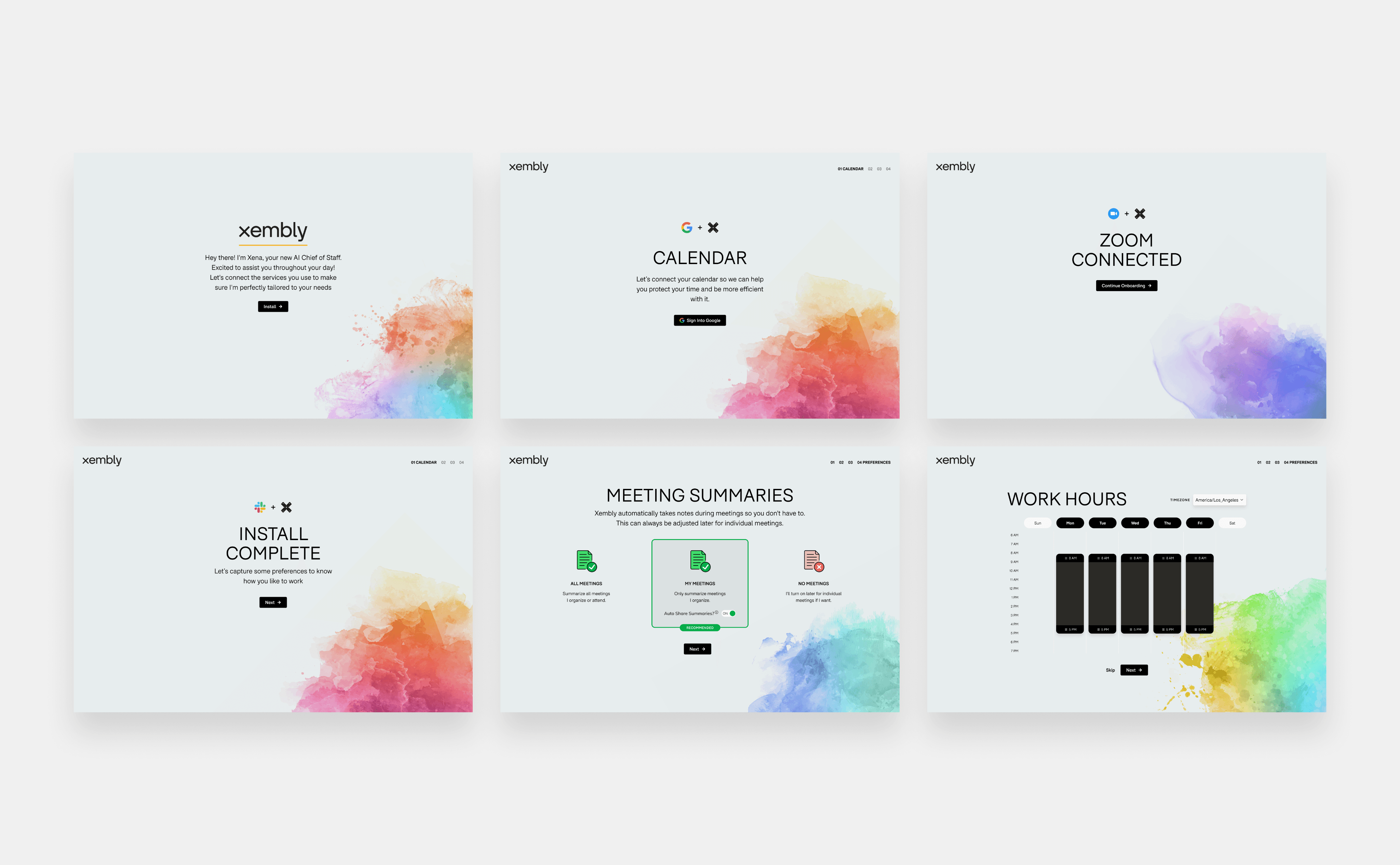

When I joined Xembly as Director of Design, the product already had a capable AI. Xena could read calendars, parse meeting notes, detect tasks, infer priority, and act on behalf of the user. Technically, it was impressive.

The problem was adoption. Activation was sitting at 18%. Users were signing up, seeing what Xena could do, and stopping there. They weren't letting her do anything.

That's a trust problem, not a features problem.

The core tension in designing any AI productivity tool is that the AI's usefulness scales with how much autonomy you give it. But autonomy requires trust. And trust, especially in a professional context where a scheduling mistake can derail a week or a missed task can cost a deal, is not given freely. It's earned incrementally, interaction by interaction.

The design question I needed to answer was: how do you build a UI that earns trust progressively, rather than demanding it upfront?

What I Built: The Transparency-First Interaction Model

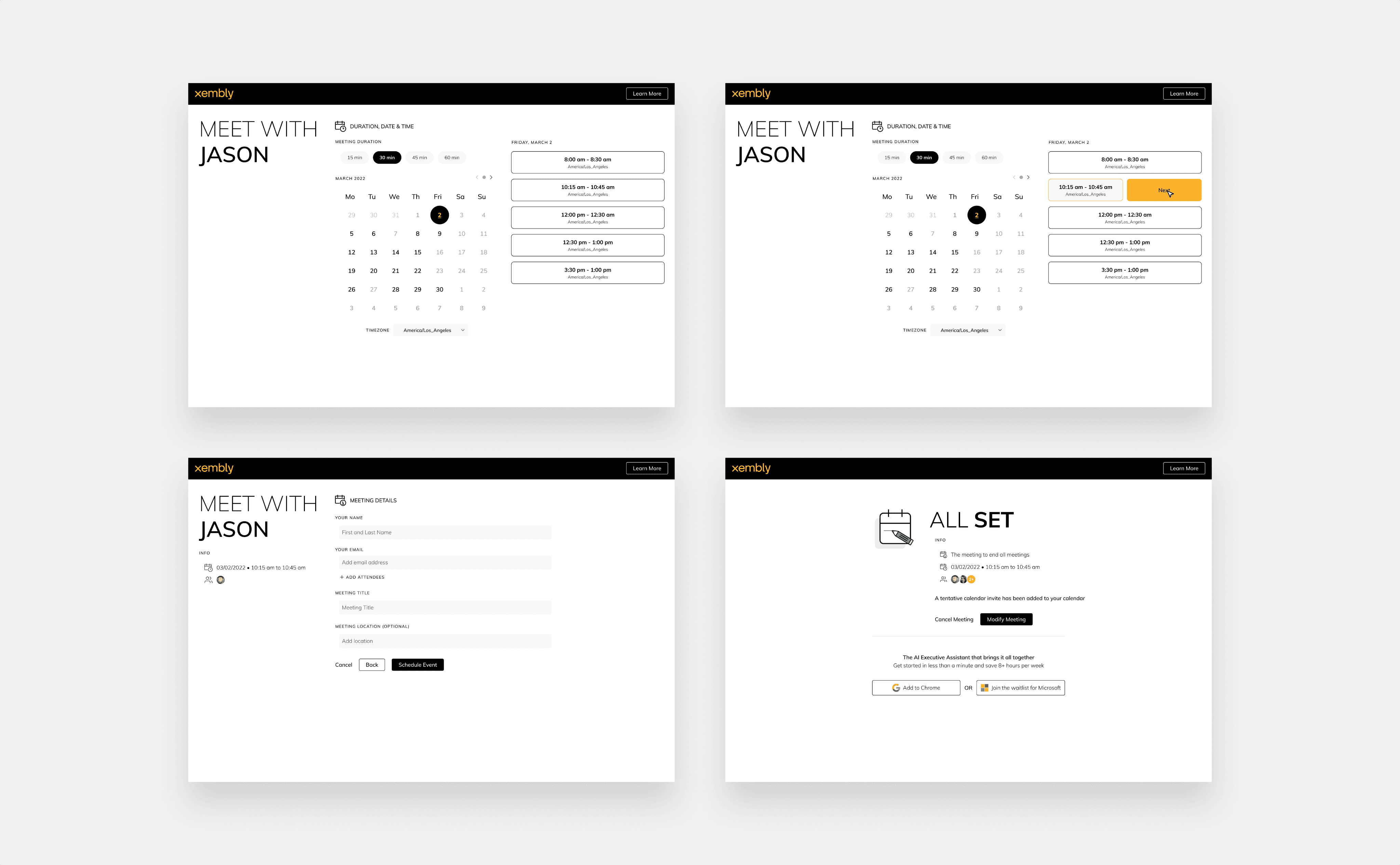

The dominant pattern for AI productivity tools at the time was to automate silently or to surface a single recommendation and ask for a yes/no. Both approaches fail for the same reason: they don't show their work. Users either don't know the AI acted, or they're asked to trust an output without understanding the input.

I replaced that model with a three-step transparency layer I designed around a single principle: Xena tells you what she saw before she asks what to do.

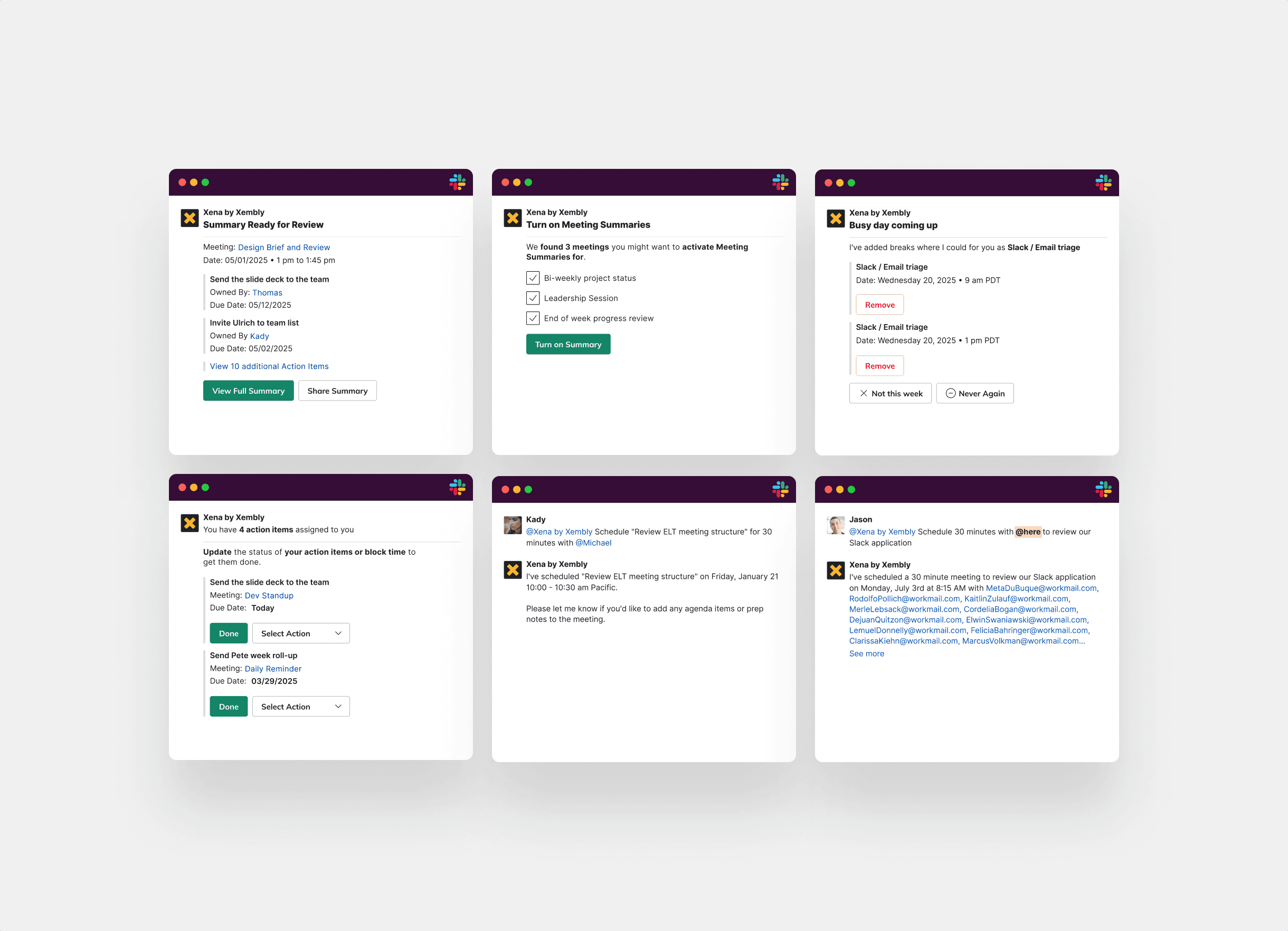

Every Xena action began with a brief, plain-language observation:

"I noticed you have a 90-minute block tomorrow that has been rescheduled twice. Your focus time patterns suggest mornings work better for deep work."

Then the user received exactly three options:

Leave it: do nothing, I'll handle this myself

I'll do it: I want to take this action, but I'll make the call

Go ahead: handle it, Xena

This was not a feature. It was the entire design philosophy of the product made visible.

The observation step was the critical piece. It externalized Xena's reasoning so users could calibrate whether her read on the situation was accurate. When users could see that Xena had understood the context correctly, they were far more likely to act on her suggestion. When her read was off, they caught it before any damage was done. Either way, they felt in control.
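To make the shape of that interaction concrete, here is a minimal sketch of the observation-then-choice flow. It is an illustration only, not Xembly's implementation: the `Suggestion` fields, the `resolve` function, and the `reschedule_focus_block` label are all hypothetical names chosen for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Choice(Enum):
    """The three options shown to the user after every observation."""
    LEAVE_IT = "Leave it"
    ILL_DO_IT = "I'll do it"
    GO_AHEAD = "Go ahead"


@dataclass(frozen=True)
class Suggestion:
    action_type: str      # hypothetical label, e.g. "reschedule_focus_block"
    observation: str      # what the assistant saw, in plain language
    proposed_action: str  # what it would do if told to go ahead


def resolve(suggestion: Suggestion, choice: Choice) -> str:
    """Decide who acts next. The observation is assumed to have been shown already."""
    if choice is Choice.GO_AHEAD:
        return f"assistant performs: {suggestion.proposed_action}"
    if choice is Choice.ILL_DO_IT:
        return f"user performs: {suggestion.proposed_action}"
    return "no action taken"


suggestion = Suggestion(
    action_type="reschedule_focus_block",
    observation="A 90-minute block tomorrow has been rescheduled twice.",
    proposed_action="move the block to the morning",
)
print(suggestion.observation)
print(resolve(suggestion, Choice.ILL_DO_IT))
```

The point of the structure is that the observation is a required field: under this sketch, a suggestion cannot exist without the reasoning that produced it.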

Over time, the three-option model did something more interesting than we expected.

The Trust Progression We Didn't Fully Anticipate

Early in onboarding, users consistently chose "I'll do it." They wanted the observation, the context surfacing, the signal — but they wanted to make the final move themselves. That was the right behavior. It meant they were engaging with Xena rather than ignoring her.

What we tracked over the following weeks was a natural graduation pattern. As users repeatedly found that Xena's observations were accurate, they began choosing "Go ahead" for a growing category of tasks. Not all tasks. The progression was domain-specific and personal.

A user might reach full automation on scheduling within three weeks but stay at "I'll do it" for task prioritization for months. Another user might delegate meeting note summaries immediately but never fully automate anything touching their direct reports' schedules.

The design implication was significant: the product needed to honor that graduation curve at the individual level, not the aggregate. Xena had to remember not just what a user had approved, but at what level of autonomy they'd approved it. We built preference memory into the interaction model so that "Go ahead" on a specific action type became the new default for that user, surfacing "Leave it" and "I'll do it" only when context changed significantly.
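A rough sketch of how that preference memory could work is below. It is a simplified illustration under stated assumptions, not the production design: the class and method names are hypothetical, "context changed significantly" is reduced to a boolean the caller supplies, and the rule that any later non-"Go ahead" choice withdraws the delegation is my assumption for the example.

```python
class PreferenceMemory:
    """Tracks, per user and per action type, whether "Go ahead" is the default."""

    def __init__(self) -> None:
        # Pairs of (user_id, action_type) the user has delegated.
        self._delegated = set()

    def record_choice(self, user_id: str, action_type: str, choice: str) -> None:
        key = (user_id, action_type)
        if choice == "Go ahead":
            self._delegated.add(key)
        else:
            # Assumption: choosing "Leave it" or "I'll do it" withdraws delegation.
            self._delegated.discard(key)

    def should_prompt(self, user_id: str, action_type: str,
                      context_changed: bool = False) -> bool:
        """Show all three options unless this action type is already delegated."""
        if context_changed:
            return True
        return (user_id, action_type) not in self._delegated


memory = PreferenceMemory()
memory.record_choice("ana", "scheduling", "Go ahead")
print(memory.should_prompt("ana", "scheduling"))            # delegated
print(memory.should_prompt("ana", "task_prioritization"))   # not delegated yet
```

Keying the memory on both user and action type is what lets one person fully automate scheduling while still reviewing every task prioritization.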

This turned activation into a growth mechanic. The longer a user stayed in the product, the more of Xena's capability they were actually using — not because we pushed features on them, but because they had built confidence through verified accuracy.

What Drove the Activation Lift

The jump from 18% to 29% activation wasn't a single design decision. It was the cumulative effect of replacing a trust-demanding interface with a trust-building one.

The observation layer reduced the perceived risk of AI action. Users stopped feeling like they were handing over control; they felt like they had a highly informed assistant surfacing what they would have had to notice themselves.

The three-option structure removed the binary pressure of yes/no. "I'll do it" gave users a middle path that kept them engaged without requiring full delegation. That middle path was where most users spent the first month — and that was fine. Active engagement with Xena's observations was itself a form of product adoption.

The preference memory meant the product got more valuable the more someone used it, rather than plateauing. Users who stayed four weeks were actively experiencing more of the product than users who stayed one week, and the qualitative feedback reflected that.

What This Taught Me About Designing for AI

The lesson I carried out of Xembly is that the job of an AI product designer in 2026 is not to make the AI disappear. The dominant instinct — to create seamless, invisible automation — is the wrong instinct for most enterprise contexts. Invisibility reads as opacity. Opacity creates anxiety. Anxiety kills adoption.

The better goal is to make the AI's reasoning legible without making it burdensome. Show the observation. Give the user agency over the action. Let trust accumulate through repetition and accuracy.

In my experience, an AI that explains what it noticed and asks what you want to do next will outperform one that acts first and informs afterward. That is not because users don't want automation (they do, deeply) but because they need to build their own confidence in the system before they'll hand it the wheel.

The "Go ahead" moment, when a user first delegates a full action to Xena without reviewing the details, is not a UX event. It's a relationship milestone. Designing for that moment, rather than trying to skip to it, is what moved the activation number.

Clients

Turing, Qualtrics, Netskope, Twilio, Docker, ServiceTitan, MNTN, Unearth
